by Yuan Liu, Zihan Chen, Shubdeep Mohapatra, Jim Furches, Zheng (Eddy) Zhang, Huiyang Zhou on Apr 20, 2026 | Tags: Quantum Computing

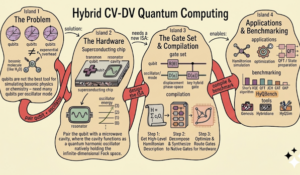

Hybrid continuous-discrete-variable (CV-DV) quantum computing combines oscillators and qubits to tackle problems that are difficult for either model alone, from bosonic simulation to quantum error correction. At ASPLOS 2026, our tutorial introduced the foundations,...

by Karu Sankaralingam on Apr 10, 2026 | Tags: Architecture, Evaluation, Machine Learning

For decades, we have designed chips in fundamentally the same way: human intuition applied to a vanishingly small slice of an impossibly large design space. That paradigm worked when Moore’s Law was lifting everything. We could afford to be wrong. We could...

by Adnan Rakin on Apr 6, 2026 | Tags: deep neural networks, Security, side-channels

Years ago, I came across three pioneering works (CSI-NN, Cache Telepathy, and DeepSniffer) in the field of reverse engineering neural networks that inspired my journey into side-channel attacks to uncover the secrets of modern Deep Neural Networks (DNNs). Fast forward...

by Sai Srivatsa Bhamidipati on Mar 12, 2026 | Tags: Accelerators, deep neural networks, Machine Learning

The debate of sparsity versus quantization has made its rounds in the ML optimization community for many years. Now, with the Generative AI revolution, the debate is intensifying. While these might both seem like simple mathematical approximations to an AI researcher,...

by Dmitry Ponomarev on Feb 3, 2026 | Tags: Blog, Editorial

As we close the book on 2025, Computer Architecture Today has seen another successful year of community engagement. We published 29 posts covering a wide spectrum of topics—from datacenter energy-efficiency to the evolving debate on LLMs in peer review, alongside trip...

by Zhongming Yu and Jishen Zhao on Jan 20, 2026 | Tags: Agents, LLM, Memory Consistency, Memory Hierarchy

Large language model (LLM) agents are quickly moving from “single agent” to *multi-agent systems*: tool-using agents, planner-orchestrator, debate teams, specialized sub-agents that collaborate to solve tasks. At the same time, the *context* these agents must operate...

by Mark D. Hill on Jan 12, 2026 | Tags: Accelerators, Memory, Modelling

TL;DR: Latency-tolerant architectures, e.g., GPUs, increasingly use memory/storage hierarchies, e.g., for KV Caches to speed Large-Language Model AI inference. To aid codesign of such workloads and architectures, we develop the simple PipeOrgan analytic model for...

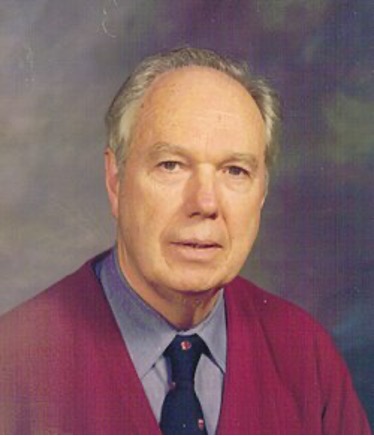

by Ruby B. Lee, Charlie Neuhauser, Timothy M. Pinkston on Jan 6, 2026 | Tags: Memoriam

Michael J. Flynn is a widely respected contributor—indeed a giant—in the field of Computer Architecture. He made highly significant and impactful contributions throughout his career, both in industry and in academia. Sadly, he passed away peacefully December 24,...

by Dimitris Gizopoulos on Dec 8, 2025 | Tags: AI accelerator, Microprocessor, Modeling, Reliability, Simulators

Microarchitecture simulators have been conceived and implemented to be valuable tools for the design of computing chips of all types (SimpleScalar, gem5, SMTSIM, Sniper, Qflex, Scarab, GPGPU-sim, Accel-Sim, Multi2Sim, NaviSim, SCALE-sim, gem5-Salam, TAO, PyTorchSim –...

by Zixuan Wang, Suyash Mahar, Luyi Li, Jangseon Park, Jinpyo Kim, Theodore Michailidis, Yue Pan, Mingyao Shen, Tajana Rosing, Dean Tullsen, Steven Swanson, Jishen Zhao on Dec 1, 2025 | Tags: AlphaFold, CXL, Fabric, Heterogeneous Systems, Memory, Profiling

This is the second article in the series, following our first blog in Dec 2023: Tuning the Symphony of Heterogeneous Memory Systems. Modern applications are increasingly memory hungry. Applications like Large-Language Models (LLM), in-memory databases, and data...
