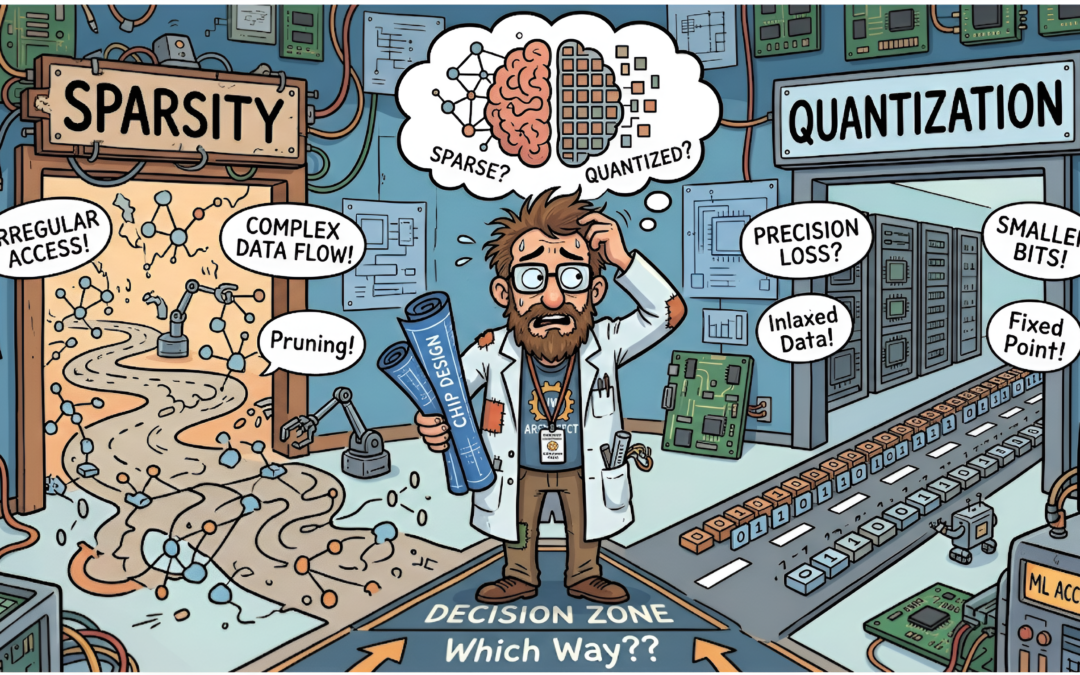

The debate over sparsity versus quantization has made the rounds in the ML optimization community for many years. Now, with the Generative AI revolution, the debate is intensifying. While both might seem like simple mathematical approximations to an AI researcher, for a hardware architect they present fundamentally different sets of challenges. Many architects in the AI hardware space are deeply familiar with watching the scale tip from one side to the other, constantly searching for a pragmatic balance. Let’s look at both techniques, unpack the architectural challenges they introduce, and explore whether a “best of both worlds” scenario is truly possible (Spoiler: It depends).

Note: We will only be looking at compute-bound workloads, which traditionally rely on dense compute units such as tensor cores or MXUs. We will set aside memory-bound workloads for now, as they introduce their own distinct set of tradeoffs for sparsity and quantization.

Sparsity

The core idea of sparsity is beautifully simple: if a neural network weight is zero (or close enough to it), just don’t do the math. Theoretically, pruning can save massive amounts of compute and memory bandwidth.

The Architecture Challenge: The Chaos of Unstructured Data

The golden goose of this approach is fine-grained, unstructured sparsity. It offers a high level of achievable compression through pruning, but results in a completely random distribution of zero elements. Traditional dense hardware hates this. Randomness leads to irregular memory accesses, unpredictable load balancing across cores, and terrible cache utilization. High-performance SIMD units end up starving while the memory controller plays hopscotch trying to fetch the next non-zero value. To architect around this, pioneering unstructured sparse accelerators—such as EIE and SCNN—had to rely heavily on complex routing logic, specialized crossbars, and deep queues just to keep the compute units fed, often trading compute area for routing overhead.
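To make the irregularity concrete, here is a minimal sketch of a sparse matrix-vector product over a compressed sparse row (CSR) layout. CSR is used here only as a familiar illustration; it is an assumption of this sketch, not the storage format of any particular accelerator mentioned above. Note how the index into `x` depends on the data, and how the amount of work per row varies.

```python
def to_csr(dense):
    """Convert a dense row-major matrix (list of lists) into CSR arrays."""
    values, col_idx, row_ptr = [], [], [0]
    for row in dense:
        for j, w in enumerate(row):
            if w != 0:
                values.append(w)
                col_idx.append(j)
        row_ptr.append(len(values))
    return values, col_idx, row_ptr


def csr_matvec(values, col_idx, row_ptr, x):
    """Multiply a CSR matrix by a dense vector, touching only non-zeros."""
    y = []
    for r in range(len(row_ptr) - 1):
        acc = 0
        for k in range(row_ptr[r], row_ptr[r + 1]):
            # Data-dependent gather: the address into x is only known
            # after col_idx[k] has been fetched.
            acc += values[k] * x[col_idx[k]]
        y.append(acc)
    return y


W = [[0, 2, 0, 0],
     [1, 0, 0, 3],
     [0, 0, 0, 0],
     [4, 5, 6, 0]]
x = [1, 2, 3, 4]

values, col_idx, row_ptr = to_csr(W)
y = csr_matvec(values, col_idx, row_ptr, x)

# Work per row is uneven, which is what makes load balancing hard.
nnz_per_row = [row_ptr[r + 1] - row_ptr[r] for r in range(len(W))]
print(y, nnz_per_row)
```

In this toy case one row has no work at all while another has three multiplies, so lanes assigned one row each would sit idle for different amounts of time.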

The Compromise: Structured and Coarse-Grained Sparsity

To tame this chaos, the industry shifted toward structured compromises. The widely adopted N:M sparsity (popularized by the 2:4 pattern in NVIDIA’s Ampere architecture) allows at most N non-zero elements in every block of M consecutive weights. Because every block carries the same amount of work, load balancing becomes predictable, and the hardware can schedule memory fetches and compute ahead of time.
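As a rough illustration of N:M pruning, the sketch below keeps the N largest-magnitude weights in each block of M and stores a small position index next to each kept value. The function names, the magnitude-based selection rule, and the index encoding (`ceil(log2(M))` bits per kept value) are assumptions made for this example, not a description of any vendor's actual format.

```python
def prune_n_of_m(row, n=2, m=4):
    """Keep the n largest-magnitude weights in every block of m.

    Returns the kept values and, for each one, its position inside
    its block (the metadata the hardware needs to select operands).
    """
    assert len(row) % m == 0, "row length must be a multiple of m"
    values, indices = [], []
    for start in range(0, len(row), m):
        block = row[start:start + m]
        by_magnitude = sorted(range(m), key=lambda i: abs(block[i]), reverse=True)
        keep = sorted(by_magnitude[:n])  # restore positional order
        values.extend(block[i] for i in keep)
        indices.extend(keep)
    return values, indices


def sparse_dot(values, indices, x, n=2, m=4):
    """Dot product using only kept weights; every block costs n multiplies."""
    acc = 0
    for k, (v, idx) in enumerate(zip(values, indices)):
        block = k // n
        acc += v * x[block * m + idx]  # idx acts as a small mux select
    return acc


row = [1, -9, 4, 0, 7, 0, -2, 3]
x = [1, 2, 3, 4, 5, 6, 7, 8]

values, indices = prune_n_of_m(row)
result = sparse_dot(values, indices, x)

bits_per_index = (4 - 1).bit_length()          # 2 bits to address 4 positions
metadata_bits = len(indices) * bits_per_index  # the "tax" for this row
print(values, indices, result, metadata_bits)
```

Every block contributes exactly two multiplies regardless of the data, which is the predictability the hardware wants. The price is the index array and the selection logic it drives.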

More recently, to tackle the quadratic memory bottleneck of long-context LLMs, we’ve seen a surge in modern sparse attention mechanisms that leverage block sparsity. Techniques like Block-Sparse Attention and Routing Attention enforce sparsity at the chunk or tile level. Instead of picking individual tokens, they route computation to contiguous blocks of tokens, allowing standard dense matrix multiplication engines to skip entire chunks while maintaining high MXU utilization and contiguous memory access. Other approaches, like StreamingLLM, evict older tokens entirely, retaining only a recent local window and a few initial “attention sink” tokens.
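A small sketch can show what tile-level skipping buys. The block pattern below (causal, a local window, plus a leading sink block) is a hypothetical pattern chosen for illustration; the parameter names `window_blocks` and `sink_blocks` are invented here and do not correspond to any specific published method.

```python
def block_mask(num_blocks, window_blocks=2, sink_blocks=1):
    """Build a causal block-level attention mask.

    mask[q][k] is True when query block q should compute against
    key block k. Each True entry is one dense tile of work; each
    False entry is a tile the matmul engine can skip entirely.
    """
    mask = []
    for q in range(num_blocks):
        row = []
        for k in range(num_blocks):
            causal = k <= q
            local = q - k < window_blocks
            sink = k < sink_blocks
            row.append(causal and (local or sink))
        mask.append(row)
    return mask


num_blocks = 6
mask = block_mask(num_blocks)

visited_tiles = sum(sum(row) for row in mask)
dense_causal_tiles = num_blocks * (num_blocks + 1) // 2
print(visited_tiles, dense_causal_tiles)
```

Each visited tile is still an ordinary dense matrix multiplication, so utilization inside a tile is unaffected. The saving comes purely from the number of tiles scheduled, and it grows with sequence length because the visited count is linear in `num_blocks` while the dense causal count is quadratic.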

The trade-off across these methods is clear: we exchange theoretical maximum efficiency for hardware-friendly predictability, paying a “tax” in metadata storage (index matrices), specialized multiplexing logic, and the persistent algorithmic risk of dropping contextually vital information.

Quantization

While sparsity aims to compute less, quantization aims to compute smaller. Shrinking datatypes from 32-bit floats (FP32) to INT8, or embracing emerging standards like the OCP Microscaling Formats (MX) Specification (for example, MXFP8 in its E4M3 and E5M2 variants), acts as an immediate multiplier for memory bandwidth and capacity. But the frontier has pushed much further than 8-bit. Recent advancements in extreme quantization, such as BitNet b1.58 (a “1-bit” LLM using ternary weights of {-1, 0, 1}, or about 1.58 bits per weight) and 2-bit quantization schemes (like GPTQ or QuIP), demonstrate that large language models can maintain remarkable accuracy even when weights are squeezed down to one or two bits.
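For readers who have not implemented quantization themselves, here is a minimal sketch of two weight quantizers: a symmetric absmax quantizer for INT8, and an absmean-style ternary quantizer. The ternary version is only loosely in the spirit of ternary-weight schemes such as BitNet b1.58 and is not a reproduction of that method; the function names are hypothetical.

```python
def quantize_absmax(weights, bits=8):
    """Symmetric per-tensor quantization: map the largest |w| to qmax."""
    qmax = 2 ** (bits - 1) - 1
    scale = (max(abs(w) for w in weights) / qmax) or 1.0  # guard all-zero input
    q = [max(-qmax, min(qmax, round(w / scale))) for w in weights]
    return q, scale


def quantize_ternary(weights):
    """Ternary quantization: scale by the mean |w|, round, clip to {-1, 0, 1}."""
    scale = (sum(abs(w) for w in weights) / len(weights)) or 1.0
    q = [max(-1, min(1, round(w / scale))) for w in weights]
    return q, scale


def dequantize(q, scale):
    """Recover approximate real values from integer codes and a scale."""
    return [v * scale for v in q]


weights = [0.5, -1.27, 0.02, 1.0]

q8, s8 = quantize_absmax(weights, bits=8)
q3, s3 = quantize_ternary(weights)

# Rounding error for values inside the representable range is at most scale / 2.
max_err8 = max(abs(w - d) for w, d in zip(weights, dequantize(q8, s8)))
print(q8, q3, max_err8)
```

Both quantizers carry a floating-point scale alongside the integer codes, and that scale is exactly the metadata the next section worries about.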

The Architecture Challenge: The Tyranny of Metadata and Scaling Factors

From an architecture perspective, the challenge of extreme quantization isn’t just the math; it’s the metadata. To maintain accuracy at 4-bit, 2-bit, or sub-integer levels, algorithms demand fine-grained control, requiring per-channel, per-group, or even per-token dynamic scaling factors. Every time we shrink the primary datapath, the relative hardware overhead of managing these scaling factors grows, because the scale storage and rescaling logic stay roughly fixed while the payload shrinks. At the same time, the quantization algorithm itself becomes more fine-grained, dynamic, and complex. We are forced to add extra logic and high-precision accumulators (often FP16 or FP32) just to handle on-the-fly de-quantization and accumulation. We aggressively shrink the MAC (Multiply-Accumulate) units, only to pay for scaling-factor handling and support for a potentially new dynamic quantization scheme, an overhead that can outweigh the gains.
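A back-of-the-envelope calculation shows why the relative cost of scaling factors rises as elements shrink. The sketch below counts storage only; the 16-bit scale and group size of 32 are assumed values for illustration, and the last line uses the 8-bit shared scale per 32-element block defined by the MX formats. It says nothing about the area or energy of the rescaling logic itself.

```python
def effective_bits(elem_bits, scale_bits, group_size):
    """Average storage per element once the shared scale is amortized."""
    return elem_bits + scale_bits / group_size


def scale_overhead(elem_bits, scale_bits, group_size):
    """Scale storage as a fraction of the element payload."""
    return (scale_bits / group_size) / elem_bits


# Assumed configuration: one 16-bit scale shared by a group of 32 elements.
for elem_bits in (8, 4, 2):
    print(
        elem_bits,
        effective_bits(elem_bits, 16, 32),
        scale_overhead(elem_bits, 16, 32),
    )

# MX-style block: one 8-bit shared scale per 32 elements, 4-bit elements.
mx4_bits = effective_bits(4, 8, 32)
print(mx4_bits)
```

With the scale held constant, halving the element width doubles the relative overhead: 6.25% at 8 bits, 12.5% at 4 bits, and 25% at 2 bits in this configuration. Shrinking the group size to recover accuracy pushes the figure up further.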

The Compromise: Algorithmic Offloading

To fix this without blowing up the complexity and area budget, the community relies on algorithmic co-design. Techniques like SmoothQuant effectively migrate the quantization difficulty offline, mathematically shifting the dynamic range from spiky, hard-to-predict activations into the statically known weights. Similarly, AWQ (Activation-aware Weight Quantization) identifies and protects a small fraction of “salient” weights to maintain accuracy without requiring complex, dynamic mixed-precision hardware pipelines. By absorbing the complexity into offline mathematics, these techniques allow the hardware to run mostly uniform, low-precision datatypes.
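The range-migration idea can be sketched in a few lines. The example below follows the per-channel smoothing factor commonly given for SmoothQuant, s_j = max|X_j|^alpha / max|W_j|^(1 - alpha), and checks that dividing activations by s while multiplying the matching weight rows by s leaves the layer output unchanged. The tiny matrices and helper names are made up for illustration, and in practice the activation maxima would come from a calibration set rather than from the input itself.

```python
def matmul(a, b):
    """Plain dense matrix multiply on lists of lists."""
    return [
        [sum(a[i][k] * b[k][j] for k in range(len(b))) for j in range(len(b[0]))]
        for i in range(len(a))
    ]


def smooth(X, W, alpha=0.5):
    """Shift dynamic range from activation channels into weight rows.

    X is tokens x channels, W is channels x outputs.
    """
    channels = len(W)
    s = []
    for j in range(channels):
        act_max = max(abs(row[j]) for row in X)
        w_max = max(abs(v) for v in W[j])
        s.append(act_max ** alpha / w_max ** (1 - alpha))
    X_s = [[row[j] / s[j] for j in range(channels)] for row in X]
    W_s = [[v * s[j] for v in W[j]] for j in range(channels)]
    return X_s, W_s, s


# Channel 0 of the activations is "spiky"; the weights are well behaved.
X = [[16.0, 1.0], [-8.0, 0.5]]
W = [[1.0, -0.5], [0.25, 1.0]]

X_s, W_s, s = smooth(X, W)

act_max_before = max(abs(v) for row in X for v in row)
act_max_after = max(abs(v) for row in X_s for v in row)
same_output = matmul(X, W) == matmul(X_s, W_s)
print(s, act_max_before, act_max_after, same_output)
```

The activation peak drops from 16 to 4 while the weight peak rises from 1 to 4, so both tensors now fit a low-precision range comparably well, and the rescaling of the weights can be done once, offline.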

However, much like the routing tax in sparsity, this algorithmic offloading comes with some compromises. These methods heavily rely on static, offline calibration datasets. If a model encounters out-of-distribution data in production (a different language, an unusual coding syntax, or an unexpected prompt structure), the statically determined scaling factors can fail, leading to outlier clipping and catastrophic accuracy collapse. Furthermore, relying on offline preprocessing creates a rigid deployment pipeline that prevents the model from adapting to extreme activation spikes on the fly.
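The clipping failure is easy to reproduce on paper. In this sketch a static INT8 scale is fixed from a small, made-up calibration set, then applied to an activation ten times larger than anything seen during calibration. The numbers and function names are hypothetical, and the "dynamic" alternative shown for contrast simply recomputes the scale from the live value.

```python
def calibrate_scale(samples, qmax=127):
    """Pick a static scale so the largest calibration value maps to qmax."""
    return max(abs(v) for v in samples) / qmax


def fake_quant(x, scale, qmax=127):
    """Quantize to a clamped integer code, then dequantize."""
    q = max(-qmax, min(qmax, round(x / scale)))
    return q * scale


calibration = [0.5, -2.0, 4.0, 1.0]        # assumed offline calibration data
static_scale = calibrate_scale(calibration)

in_dist = 3.0                              # within the calibrated range
outlier = 40.0                             # out-of-distribution spike

in_dist_hat = fake_quant(in_dist, static_scale)
outlier_static = fake_quant(outlier, static_scale)              # clipped
outlier_dynamic = fake_quant(outlier, calibrate_scale([outlier]))

relative_error = abs(outlier - outlier_static) / outlier
print(in_dist_hat, outlier_static, outlier_dynamic, relative_error)
```

The in-distribution value survives with a small rounding error, but the spike saturates at the calibrated maximum and loses about 90% of its magnitude. Recomputing the scale at run time avoids the clipping, at the cost of the dynamic scaling hardware that offline methods were meant to remove.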

Is there a “best of both worlds”?

So, knowing these trade-offs, do we sparsify or do we quantize? Many years ago, the Deep Compression paper showed we could do both. But today, pulling this off at the scale of a 70-billion-parameter LLM is incredibly difficult. Any combined scheme runs into the classic hardware optimization catch-22 (see “All in on MatMul?”): no one uses a new piece of hardware because it’s not supported by software, and it’s not supported by software because no one’s using it.

So what’s the path forward for hardware architects? In my opinion, the following:

- Deep Hardware-Software Co-design: The days of throwing a generic matrix-multiplication engine at a model are over. We need to work directly with AI researchers so that when they design a new pruning threshold or a novel sub-byte data type, the hardware already has a streamlined, fast path for the metadata.

- Generalized Compression Abstractions: Historically, we have designed accelerators that are either “good at sparsity” (with complex routing networks) or “good at quantization” (with mixed-precision MACs). Moving forward, we need to view these not as orthogonal features, but as a unified spectrum of compression. Architectures must be designed to dynamically adapt—perhaps fluidly dropping structurally sparse blocks during a memory-bound decode phase, while leaning on extreme sub-byte quantization during a compute-heavy prefill phase—potentially even sharing the same underlying logic.

- Balance Efficiency and Programmability: As explored in the “All in on MatMul?” post, we need to keep our hardware flexible. Over-fitting to today’s specific sparsity pattern or quantization trick risks trapping us in a local minimum. We must maintain enough programmability to enable future algorithm discovery and break free from the catch-22.

Notable research along this path includes “Effective interplay between sparsity and quantization”, which proves that the two techniques are not orthogonal and explores how they interact. The Compression Trinity work looks at multiple techniques across sparsity, quantization, and low-rank approximation, and takes a holistic view of the optimization space across the stack.

Ultimately, as alluded to before, there is no single silver bullet, and like all open architecture problems, the answer is always “it depends”. But in the era of Generative AI, it depends on whether we view sparsity and quantization as competing alternatives or as pieces of the same puzzle. Perhaps it’s time we stop asking which one is better, and start designing architectures flexible enough to embrace the realities of both.

About the Author:

Sai Srivatsa Bhamidipati is a Senior Silicon Architect at Google working on the Google Tensor TPU in the Pixel phones. His primary focus is on efficient and scalable compute for Generative AI on the Tensor TPU.

Authors’ Disclaimer:

Portions of this post were edited with the assistance of AI models. Some references, notes and images were also compiled using AI tools. The content represents the opinions of the authors and does not necessarily represent the views, policies, or positions of Google or its affiliates.

Disclaimer: These posts are written by individual contributors to share their thoughts on the Computer Architecture Today blog for the benefit of the community. Any views or opinions represented in this blog are personal, belong solely to the blog author and do not represent those of ACM SIGARCH or its parent organization, ACM.